Huawei: Sound of Light

About

Sound of Light is an exploration of what’s possible when nature, technology and artistic expression come together to create something truly special. Inspired by the Huawei Mate 20 Pro’s AI capabilities, we found a way to transform Northern Lights footage into music using AI.

Background

How does a telecommunications giant promote itself beyond traditional media? For the launch of the Huawei Mate 20 Pro, an AI-centric phone, it had to do exactly that. The campaign had to be tech-forward and forward-thinking, and let the world know exactly what the company was all about.

Technical Execution

Using artificial intelligence, we translated the Northern Lights into music. With the help of a professional aurora chaser, we trained the AI to classify auroras and built an app that analyses each one and generates corresponding musical phrases. We worked with a best-in-class composer and a live orchestra to create the soundtrack.

Understanding types of auroras

No computer vision algorithm knows how to recognise types of auroras, and even people tell them apart only subconsciously. Yet auroras clearly look different: some mysterious, some magical, some calm, some dynamic.

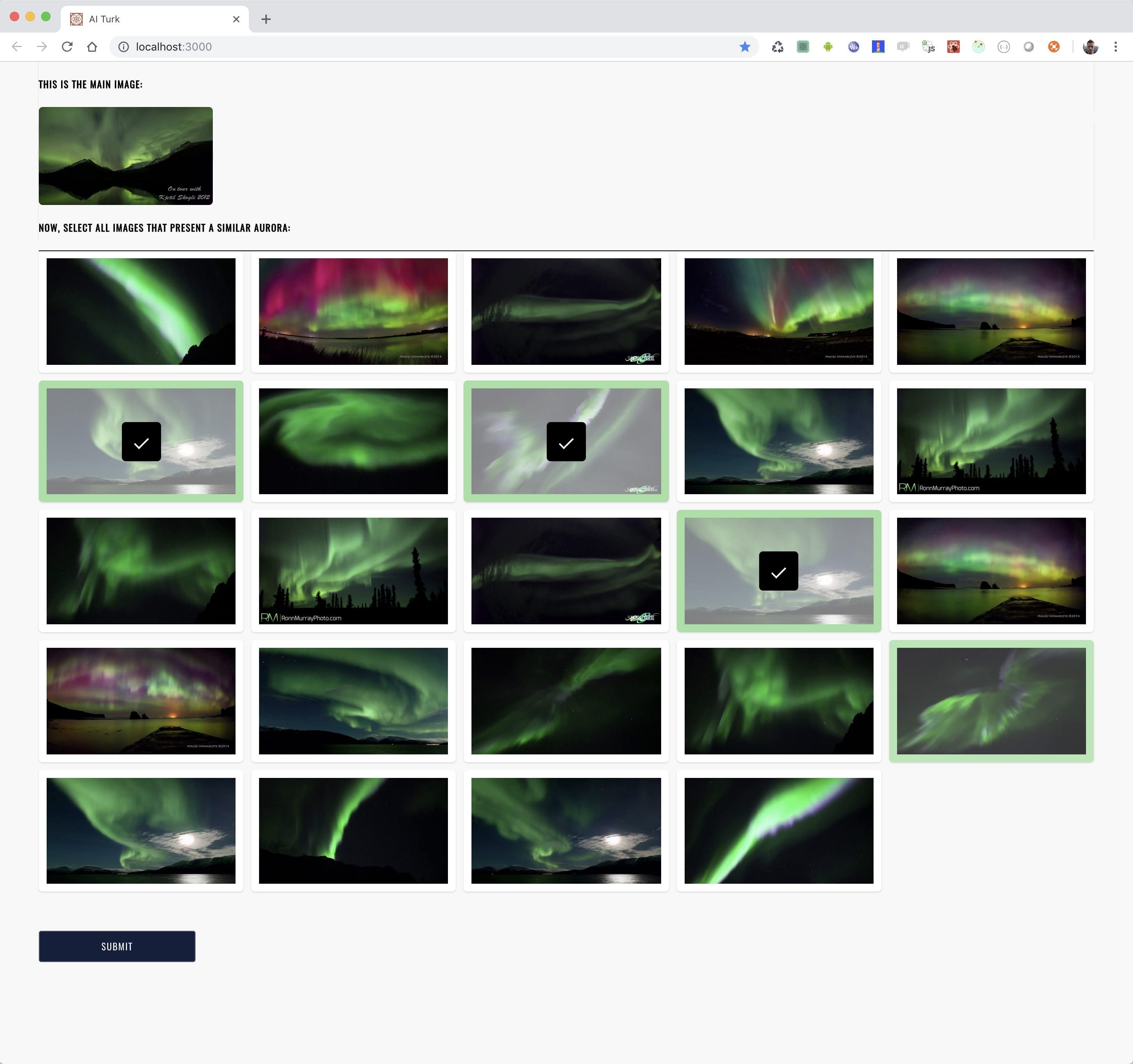

So we started by crowdsourcing people's perception of auroras, asking them to group similar-looking photos of the Northern Lights. We deployed the grouping tool on Amazon Mechanical Turk.

Through crowdsourcing, we obtained over 10,000 data samples within just 24 hours.
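A minimal sketch of how grouping responses like these could be turned into pairwise similarity counts. The response format (a list of groups of photo IDs per worker) and the function name `co_grouping_counts` are assumptions made for illustration, not the actual tool.

```python
from collections import Counter
from itertools import combinations


def co_grouping_counts(responses):
    """Count how often each pair of photos was placed in the same group.

    `responses` is a list of worker responses; each response is a list of
    groups, and each group is a list of photo IDs (hypothetical format).
    """
    counts = Counter()
    for groups in responses:
        for group in groups:
            # Sort so that (a, b) and (b, a) are counted as the same pair.
            for pair in combinations(sorted(set(group)), 2):
                counts[pair] += 1
    return counts


# Two hypothetical workers grouping the same four photos.
responses = [
    [["a", "b"], ["c", "d"]],
    [["a", "b", "c"], ["d"]],
]
counts = co_grouping_counts(responses)
```

Pairs that many workers put together end up with high counts, which gives a simple, human-derived measure of how alike two photos look.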

This allowed us to develop a machine learning clustering algorithm using Google's TensorFlow that automatically groups tens of thousands of images of the Northern Lights into buckets of the same "type".
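To illustrate what grouping images into buckets means in practice, here is a plain k-means sketch over small feature vectors. It is a stand-in written in pure Python, not the TensorFlow model described above; the feature vectors, the `kmeans` function and the choice of k-means itself are all assumptions.

```python
import math


def kmeans(points, k, iterations=20):
    """Plain k-means on lists of floats; returns (centroids, labels)."""
    # Deterministic initialisation: use the first k points as centroids.
    centroids = [list(p) for p in points[:k]]
    labels = [0] * len(points)
    for _ in range(iterations):
        # Assignment step: attach each point to its nearest centroid.
        labels = [
            min(range(k), key=lambda c: math.dist(p, centroids[c]))
            for p in points
        ]
        # Update step: move each centroid to the mean of its members.
        for c in range(k):
            members = [p for p, lab in zip(points, labels) if lab == c]
            if members:
                centroids[c] = [sum(dim) / len(members) for dim in zip(*members)]
    return centroids, labels


# Hypothetical 2-D image features forming two obvious groups.
features = [[0.0, 0.1], [5.0, 5.1], [0.2, 0.0], [5.2, 4.9]]
centroids, labels = kmeans(features, k=2)
```

Each resulting label plays the role of an aurora "type", and each centroid is a reference point that new frames can later be compared against.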

Turning it into music

We then worked with our Creative Director and our music team to assign a matching musical motif to each of the identified aurora types.

Next, we split the final video edit into key frames and, for each frame, used our trained clustering model to get the four most likely aurora types that the frame represents, along with a similarity score for each. This also gives four musical motifs, as each aurora type has a musical motif assigned.
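The per-frame lookup can be sketched as ranking aurora types by similarity and keeping the top four. This sketch assumes each frame and each type is represented by a feature vector and uses cosine similarity as the score; the type names, vectors and the function `top_aurora_types` are hypothetical.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def top_aurora_types(frame_vector, type_centroids, n=4):
    """Return the n most similar types as (name, score), best first."""
    scored = [
        (name, cosine_similarity(frame_vector, centroid))
        for name, centroid in type_centroids.items()
    ]
    scored.sort(key=lambda item: item[1], reverse=True)
    return scored[:n]


# Hypothetical type centroids and one hypothetical key-frame vector.
type_centroids = {
    "calm": [1.0, 0.0, 0.0],
    "dynamic": [0.0, 1.0, 0.0],
    "mysterious": [0.0, 0.0, 1.0],
    "magical": [1.0, 1.0, 0.0],
    "faint": [-1.0, 0.0, 0.0],
}
matches = top_aurora_types([0.9, 0.3, 0.1], type_centroids)
```

Because every type has a motif assigned, the four returned names map directly to four motifs, each carrying its own score.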

But we didn't stop at playing pre-selected motifs. As we had four motifs and a similarity score for each one, we used the concept of latent spaces and Google's Magenta tool to generate completely new audio: an interpolation weighted by the frame's similarity to all four audio samples.
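The interpolation idea can be sketched as a similarity-weighted average of latent vectors. Encoding each motif into a latent vector and decoding the blend back into audio would be handled by a generative model and is not shown; the vectors, scores and the function `blend_latents` below are assumptions for illustration only.

```python
def blend_latents(latents, similarities):
    """Weighted average of latent vectors, weights proportional to similarity."""
    # Negative scores contribute nothing rather than pulling the blend away.
    weights = [max(s, 0.0) for s in similarities]
    total = sum(weights)
    if total == 0:
        raise ValueError("at least one similarity must be positive")
    # Normalise so the weights sum to 1.
    weights = [w / total for w in weights]
    dims = len(latents[0])
    return [sum(w * z[d] for w, z in zip(weights, latents)) for d in range(dims)]


# Four hypothetical motif latents and their similarity scores for one frame.
motif_latents = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [0.0, 0.0]]
similarities = [0.5, 0.25, 0.25, 0.0]
blended = blend_latents(motif_latents, similarities)
```

A frame that strongly resembles one aurora type yields a blend close to that type's motif, while an ambiguous frame lands somewhere between all four.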

MIDI modulation

To go even further, we analysed how the aurora film edit changes over time and used those values as signals to modulate the audio. For example, we used optical flow to track how the aurora actually moves, which drove additional sound effects.
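One way to picture the modulation step is to map a per-frame motion value onto the 0 to 127 range of a MIDI controller. The sketch below assumes the motion magnitudes have already been computed (for example, as the mean optical-flow magnitude of each frame); the smoothing factor and the function `motion_to_midi_cc` are hypothetical.

```python
def motion_to_midi_cc(magnitudes, smoothing=0.5):
    """Map per-frame motion magnitudes to MIDI controller values (0-127)."""
    if not magnitudes:
        return []
    # Exponential smoothing so the controller does not jitter frame to frame.
    smoothed = []
    previous = magnitudes[0]
    for m in magnitudes:
        previous = smoothing * previous + (1.0 - smoothing) * m
        smoothed.append(previous)
    # Rescale the smoothed signal to the full controller range.
    low, high = min(smoothed), max(smoothed)
    span = high - low
    if span == 0:
        return [0] * len(smoothed)
    return [round(127 * (s - low) / span) for s in smoothed]


# Hypothetical motion magnitudes for four consecutive frames.
cc_values = motion_to_midi_cc([0.0, 2.0, 4.0, 4.0])
```

The resulting values could then drive any controllable parameter, such as a filter or effect level, so that faster aurora movement is heard as a stronger effect.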

Putting it all together

The AI-powered tools, suggestions, generated audio and algorithms were delivered to the composer, who turned them into a proper musical masterpiece.

This is a story of collaboration between human and machine. Neither is meant to replace the other. Synergy is the key to success.